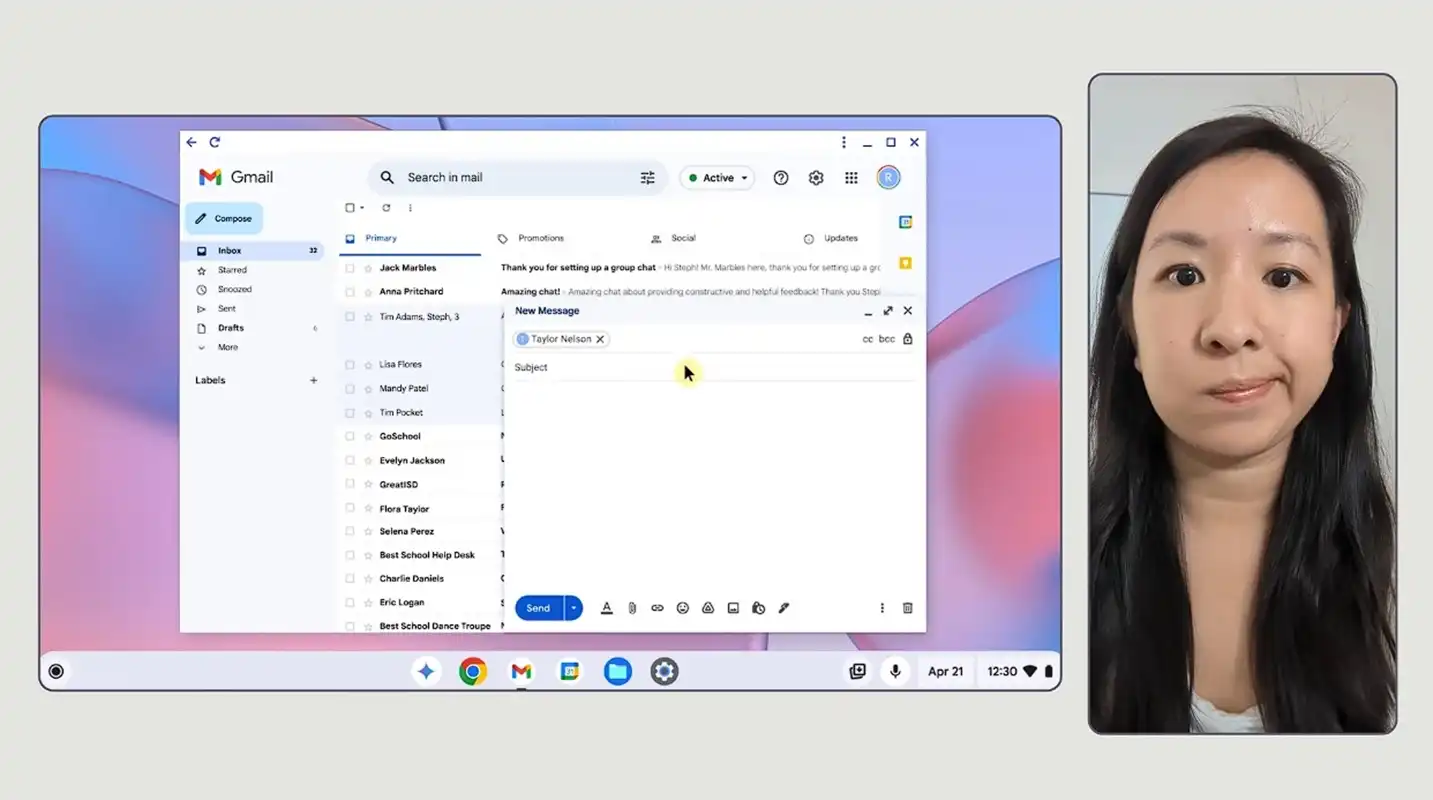

YouTube continues to stumble into controversy over its reliance on AI-driven tools, and its latest move is once again drawing criticism from both viewers and creators. Reports surfaced this week that the platform is quietly applying machine learning filters to enhance certain Shorts videos—without informing the creators who uploaded them. Users began noticing a strange, almost glossy look appearing on some clips, leading to speculation that YouTube was tinkering with videos post-upload. The platform later confirmed this experiment, sparking questions about transparency and control over creative work.

According to Rene Ritchie, YouTube’s head of editorial, the company is testing enhancements such as unblurring, denoising, and sharpening, similar to what modern smartphones do when recording. Ritchie emphasized that this wasn’t generative AI or upscaling, labeling it instead as “traditional machine learning technology.” While the distinction was no doubt meant to reassure users, it raises eyebrows for two reasons. First, the phrase feels like deliberate marketing spin at a time when public trust in anything labeled “AI” is at a low point. Second, the subtle nature of these edits makes them harder for users to detect, so creators are finding out about changes to their own work only after publication.

This approach puts YouTube in a precarious position. Enhancing old, grainy videos or cleaning up compression artifacts is one thing; altering fine details like motion and skin textures without permission is another. Many viewers have already seen how over-applied smoothing filters on other platforms can strip authenticity from content, making it look uncanny or artificial. Now, with Google’s deep ties to AI development and its track record of aggressively pushing these technologies, it’s no surprise that many interpret even modest machine learning tweaks as another step toward AI-driven content manipulation.

Ultimately, the lack of transparency is the biggest misstep. Viewers and creators alike are already wary of subtle AI integrations in media, whether it’s generated text, images, or music. By quietly applying machine learning filters behind the scenes, YouTube risks eroding trust not just with its audience, but also with the very creators whose work keeps the platform alive. If creators can’t be certain their uploads will appear exactly as intended, they may begin to question whether YouTube is still the best place to share their content.